Choice in the Context of Hypernudging: Rethinking Autonomy in Recommendation Systems

Author Name

Clara Silwal

Published On

January 29, 2026

Keywords/Tags

hypernudging, microtargeting, first/second order desires, autonomy, choice architecture

1. Introduction

The personalization of digital experiences has introduced a paradigm wherein users dynamically engage with choice contexts designed to predict their individual desires (Lanzing, 2019; Yeung, 2017). Amidst the abundance of resonating choices available within these choice contexts lies a subtle challenge to users’ autonomy. Using Frankfurt’s and Dworkin’s hierarchical frameworks of autonomy, this paper explores how microtargeting and hypernudging threaten autonomy by obstructing the execution of second order volitions and by impinging on procedural and substantive independence.

In this paper, I will first outline the definition of autonomy and explore its nuances in section 2. In particular, I will explore Frankfurt’s conception of autonomy with respect to first order and second order desires, and Dworkin’s framework of autonomy with special consideration of substantive and procedural independence. I will then shift to examining the architecture of recommendation algorithms in section 3. I shall rely on the work of Karen Yeung in defining hypernudging, and draw on Stuart Mills’ framework on the burdens of hypernudging. This exploration will be set against the backdrop of microtargeting and data doubles, with added attention to the inner workings of recommendation algorithms to bring out how their internal structures affect hypernudging and, consequently, the user’s autonomy. In section 4, I shall offer my original analysis of how the architecture of recommendation algorithms, through the mechanisms of hypernudging and microtargeting, impinges on the user’s autonomy as defined by Frankfurt and Dworkin. Finally, in section 5, I shall consider some critiques that may be raised against this paper and offer my responses to them.

2. Hierarchical Definitions of Autonomy

In this section, I will elaborate on the definitions of autonomy as introduced by Frankfurt and Dworkin. Both Frankfurt and Dworkin have a hierarchical notion of desires. Frankfurt focuses on the interaction between the desires in the hierarchy and their enactment, while Dworkin focuses more on an agent’s reflection to enact the desire.

2.1 Frankfurt’s account of First and Second Order Desires

Frankfurt’s model of autonomy postulates that an agent’s autonomous will is exerted in the alignment of their first order desires with their second order volitions (Frankfurt, 2001). I will elaborate on the definitions of first and second order desires and volitions as coined by him. Furthermore, I will outline some possible outlier cases which might emerge out of this classification, as they will be important in section 4.

Let us consider the statement: Agent A has a desire for X. According to Frankfurt, this statement by itself does not adequately capture the nature of A’s desire for X, as it admits multiple conflicting elaborations. The statement “A has a desire for X” does not convey the strength of A’s desire for X, or whether A has conflicting desires which are stronger than the desire for X. It could also mean that upon further deliberation on this desire, A would no longer want X. In this case, X is a first order desire. If A wants to enact X, then X is an effective first order desire. Furthermore, let us consider the statement: A has a desire for the desire for X. In this case, X is a second order desire. If A has the will to enact this desire, then it is a second order volition. It is important to note that A could have a second order desire for X, but not a second order volition.

To elucidate the point with an example, a social media user’s desire to click on a targeted ad for a product which, upon reflection, they would have no use or desire for would be a first order desire. If they purchased the product based on this initial desire, it would be an effective first order desire. If, however, they had a desire for their desire for the product, then it would be a second order desire. In other words, if they endorsed their first order desire for the product after reflection and deliberation, then it would be a desire of the second order. Purchasing the product after realizing that it is a second order desire would be a second order volition. According to Frankfurt, this enactment of their second order volition would be an example of an autonomous action.

There are a few edge cases to consider with this structure. Firstly, situations could arise where an agent has multiple second order desires which contradict each other. If the conflict between these desires is strong enough, there emerges a risk of no second order volition being strong enough to be enacted.[1] The second edge case to emerge from this hierarchical structure is the case where higher order desires emerge as a result of an agent’s lack of identification with their lower order desires. A decisive identification with their desires is a requirement for the enactment of an agent’s will or volition. If an agent does not identify with any second (or higher) order volitions, Frankfurt classifies them as a non-autonomous agent. These edge cases will be pertinent to the analysis in section 4.

2.2 Dworkin’s account of Authenticity and Independence

Similar to Frankfurt’s depiction of autonomy, Dworkin’s autonomy with respect to authenticity can be understood as a hierarchical order of endorsements of desires. “Autonomy cannot be located on the level of first-order consideration but in the second-order judgments we make concerning first-order considerations. (…) The autonomous individual is able to step back and formulate an attitude towards the factors that influence [their] behavior” (Dworkin, 1976).

[1] This reminds me of Sylvia Plath’s famous fig tree metaphor, in which the narrator has so many choices regarding her future that she cannot choose one while abandoning all the rest, and she watches all the choices wither away in her indecision (Plath, 2005).

In addition to the hierarchical depiction of autonomy, Dworkin also has a second element of independence which he claims should be considered. He postulates that autonomy = authenticity + independence. In order for an agent’s decision to be autonomous, it needs to be authentic (“[their]”) and independent (“own”) (Dworkin, 1976). An agent is autonomous with respect to authenticity if they have considered their motivations for themselves, and have assessed that they endorse their desire to identify with them. Similarly, an agent is autonomous with respect to independence if their motivations are procedurally and substantively independent.

An individual has procedural independence if their motivations for their desires are not the result of an inability to “rationally and critically view a situation” (Dworkin, 1976). The motivations for those desires should not be a result of “manipulation or deception”. For example, if an agent is motivated to sign a contract, then they should have access to all the information regarding the details of this contract, along with the ability to rationally critique and analyze their opinions on the contract themselves, without external deception or coercion.

In contrast, an individual is autonomous with respect to substantive independence if, in making a decision, they are not “renouncing their independence of action or thought” (Dworkin, 1976). For an agent to be substantively independent, their motivations should be influenced by their own thoughts and actions, as opposed to being a consequence of passively adopting an external entity’s motivations and value structures. For example, let us consider a scenario where a person is part of a social group in which each member owns a particular item, which the person would not have wanted to own if they had reflected on it independently. If the person purchases it regardless, then their motivations would not be a consequence of substantive independence.

3. Microtargeting and Hypernudging in Recommendation Systems

While autonomy is a theory on making choices, microtargeting and hypernudging are systems made to influence those choices. Since they are dominant forces in the thesis of this paper, some time is taken to explore these concepts. This section elaborates on some details of microtargeting and hypernudging in relation to recommendation algorithms. First, I will outline data doubles and microtargeting, and then I will explain hypernudging. This section will conclude with some explanations of the burdens of hypernudging.

3.1 Data Doubles and Microtargeting

Data doubles are digitized codifications of ourselves, often reassembled, aggregated, and categorized by methods of statistical reasoning (Bouk, 2017). As phrased by Haggerty and Ericson, they can be intuited as the discrete “abstractions” of the human self composed of “pure information” (Haggerty & Ericson, 2001). Data doubles paired with statistical analysis can reveal insightful patterns regarding an individual’s habits, behaviors, or preferences.

With the commodification of data doubles and the advent of the big data economy, there emerge practical applications of the results of tracking, creating, and analyzing data doubles and aggregates (Bouk, 2017). In the context of recommendation systems, which will be the focus of this paper, this commodification is particularly pertinent (Lanzing, 2019). When a user profile, or their data double, is created based on multiple trackable metrics which are digitized with or without their explicit consent, it is then viable to recommend items to them by analyzing which items align with their data double (Bouk, 2017). In order to conceptualize how the analysis of data doubles is pertinent in the nature of recommendations, it is important to understand how these systems function. Due to the intricacies of the algorithm, I will provide a short chronological account of their evolution in terms of algorithmic sophistication and predictive accuracy.

One of the earliest methodologies for using data doubles for recommendations is what Bernard Harcourt terms “doppelgänger logic” (Harcourt, 2015). It relies on the aggregation of a network of data doubles which have similar metrics. When similar data doubles which “mirror” each other are aggregated, their interests and preferences can be recommended to each other, predicting the desires of the users while signal boosting the items themselves (Harcourt, 2015). A slightly more sophisticated methodology is Matrix Factorization, an algorithm which vectorizes data doubles and recommendation items and embeds them in a shared latent vector space, such that the inner product of a user vector and an item vector estimates how well the item would be received (Koren et al., 2009). Modern recommendation systems push these methods further using tools such as neural networks or deep learning models, which can have a large number of parameters due to their layered architecture and trainable weights (Seaver, 2022).

Data doubling and aggregation have widespread usage in social media algorithms in the context of recommendation systems. For example, Spotify has a “daylist” feature as of 2024, where users are recommended a series of “highly specific, dynamic, and hyper-personalized” playlists algorithmically curated on the basis of which micro-genres they listen to during particular time intervals over the period of a week, and on a prediction of which playlist would appeal the most to them in the present moment (Goldrick, 2023). This is an example of microtargeting, a phenomenon where individuals are meticulously profiled and targeted based on predictions of what they would like to consume or identify with at any given moment. As per Spotify’s statement, “the playlist helps you understand more about your taste in music—and express your unique audio identity” (Goldrick, 2023). As the predictive modeling algorithms used for microtargeting increase in accuracy, so will the identification of users with the recommended content.
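To make the “doppelgänger logic” described above more concrete, the following is a minimal sketch in Python. It is an illustration under stated assumptions rather than any platform’s actual method: the users, items, and interaction counts are invented, the function names are hypothetical, and cosine similarity is only one of several possible ways to decide that two data doubles “mirror” each other.

```python
from math import sqrt

# Hypothetical data doubles: each user is reduced to a vector of
# interaction counts over the same four items (all values invented).
ITEMS = ["embroidery", "resin art", "painting", "crochet"]
DATA_DOUBLES = {
    "user_a": [5, 0, 3, 0],
    "user_b": [4, 0, 3, 2],
    "user_c": [0, 5, 0, 1],
}


def cosine_similarity(u, v):
    """Similarity of two data doubles; values near 1.0 mean they 'mirror' each other."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = sqrt(sum(a * a for a in u)) * sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def find_doppelganger(user, doubles):
    """Return the other user whose data double is most similar to this one."""
    others = (name for name in doubles if name != user)
    return max(others, key=lambda name: cosine_similarity(doubles[user], doubles[name]))


def recommend(user, doubles, items):
    """Suggest items the doppelganger engaged with but the user has not."""
    twin = find_doppelganger(user, doubles)
    return [
        item
        for item, mine, theirs in zip(items, doubles[user], doubles[twin])
        if mine == 0 and theirs > 0
    ]


print(find_doppelganger("user_a", DATA_DOUBLES))   # user_b
print(recommend("user_a", DATA_DOUBLES, ITEMS))    # ['crochet']
```

In this toy setting, user_a is matched with user_b because their interaction patterns nearly coincide, and user_a is then shown the one item that only user_b has engaged with. Matrix factorization can be read as a refinement of the same idea: instead of searching for an explicit twin, the system learns latent vectors for users and items and scores each pairing directly.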

3.2 Hypernudging

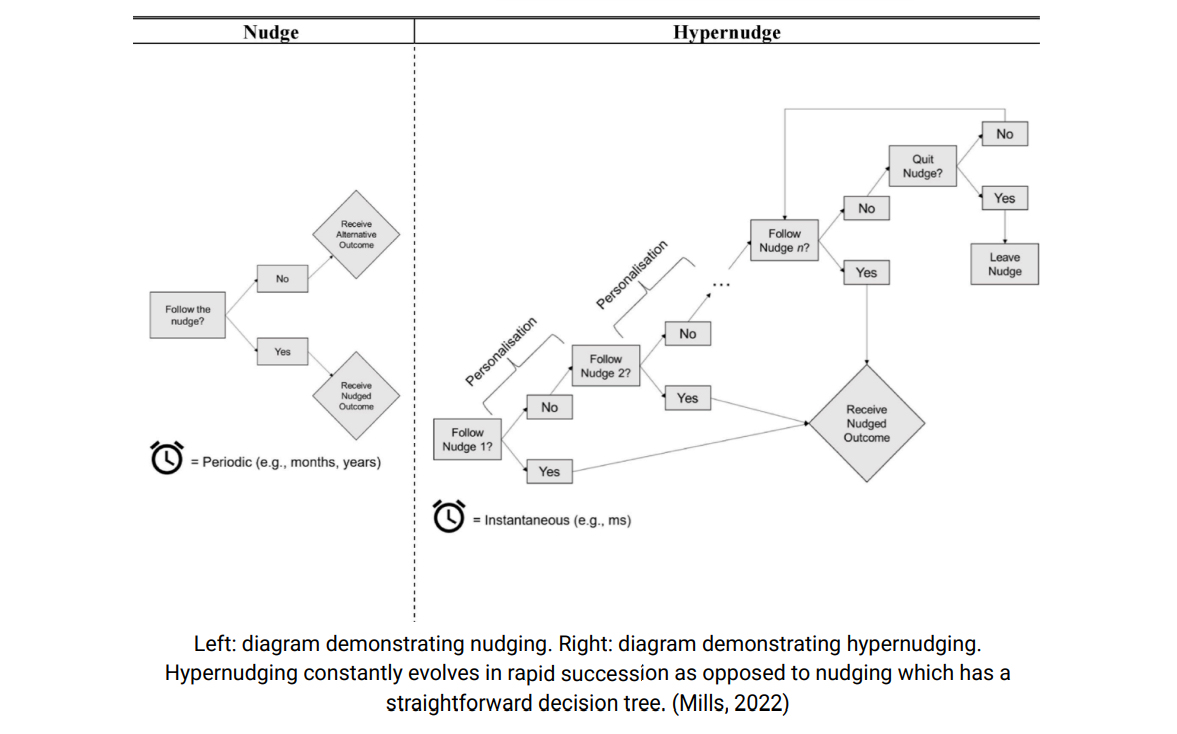

If microtargeting within the context of recommendation systems is the phenomenon through which a user is profiled in granular detail for the purposes of content recommendation, hypernudging is the methodology through which the user’s decision making is guided. The term “hypernudging” is defined by Karen Yeung as the phenomenon through which individual decision making is subtly shaped through multiple small but cumulative “nudges”, with the careful rearrangement of a user’s choice context through the analysis of big data (Yeung, 2017). The concept of hypernudges builds on the concept of a “nudge” as introduced by Thaler and Sunstein, which is a phenomenon where an individual’s behavior can be altered in a predictable way through careful organization of the choices available to them, without perceptibly limiting any options (Thaler, 2021). Hypernudges are crafted through careful and dynamic choice architecture, where choices are arranged in such a way that an individual in any particular choice context can be subtly guided (“nudged”) to prefer a particular set of choices over another. This relies on the exploitation of our cognitive biases, and on the passive, heuristically oriented, and subconscious nature of an individual’s decision making process (Kahneman, 2011).

Yeung outlines three elements for the configuration of a hypernudge. Firstly, an individual’s choice context is tailored in accordance with the analysis of their data double. Secondly, their interactions with their choice context are noted and analyzed by choice architects (in this paper, the choice architect is the recommendation algorithm). Thirdly, their choice context is designed to align with the population-wide trends within a broader social context (Yeung, 2017). Stuart Mills further expands on this ontology by depicting a hypernudge as a series of nudges which dynamically modify themselves over a period of time. He distinguishes four traits of hypernudging: personalization, real-time (re)configuration, predictive capacity, and hiddenness (Mills, 2022). Through the elusive and dynamic reconfiguration of choice contexts as determined by predictive algorithms tailored to each individual, users can be “hypernudged” into preferred directions as mapped by the choice architects.

This phenomenon is particularly prevalent in recommendation algorithms, where each user is presented with a micro-targeted choice context which they are predicted to resonate with, and every interaction is an impetus for the elusive reconfiguration of their choice context into a new hypernudge.

Given the elusive design of hypernudges, Mills outlines three burdens of hypernudging which one can encounter as a decision maker in a choice context: the burdens of avoidance, understanding, and experimentation (Mills, 2022).

1. The burden of avoidance: As a decision maker makes a choice within a curated choice context, the choice context is consequently adjusted and reintroduced in real time, prompting them for another choice. Since there is no theoretical limit to the number of nudges linked in a hypernudge, the influence of hypernudges cannot be avoided as long as the decision maker is interacting within a choice context in a hypernudge.

2. The burden of understanding: Hypernudges and choice contexts are often a result of abstract algorithms with a large number of unknown parameters. In addition, it is non-trivial to ascertain what decision makers are being nudged towards, making it difficult to understand the ways in which they are being motivated in the choice context.

3. The burden of experimentation: It is difficult to ascertain what the hypernudging system is optimizing for, as by design, it is meant to be intangible. The algorithms are dynamically shifting and experimenting with the decision makers, making it difficult to interact with them in simple ways.
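The chained structure of a hypernudge, in which each choice feeds back into the next choice context, can be sketched as a simple loop. The Python below is a toy model under my own assumptions, not a description of any real recommendation system: the catalog, the profile weights, the update rule, and all function names are hypothetical and chosen only to make the feedback visible.

```python
# Hypothetical catalog mapping each item to a topic, and a hypothetical
# data double holding one affinity weight per topic (all values invented).
CATALOG = {
    "video_1": "crafts",
    "video_2": "crafts",
    "video_3": "news",
    "video_4": "sports",
}
PROFILE = {"crafts": 2, "news": 3, "sports": 1}


def build_choice_context(profile, catalog, size=2):
    """Personalization: show the items whose topic the profile favours most."""
    ranked = sorted(catalog, key=lambda item: profile.get(catalog[item], 0), reverse=True)
    return ranked[:size]


def update_profile(profile, topic, rate=2):
    """Real-time reconfiguration: every interaction shifts the data double."""
    updated = dict(profile)
    updated[topic] = updated.get(topic, 0) + rate
    return updated


def hypernudge_session(profile, catalog, choose, steps=3):
    """Chain nudges: context -> choice -> updated profile -> new context."""
    history = []
    for _ in range(steps):
        context = build_choice_context(profile, catalog)
        picked = choose(context)
        profile = update_profile(profile, catalog[picked])
        history.append((context, picked))
    return profile, history


# A user who always picks the second item they are shown.
final_profile, history = hypernudge_session(PROFILE, CATALOG, choose=lambda ctx: ctx[1])
for context, picked in history:
    print(context, "->", picked)
print(final_profile)
```

In this run, a single click on a crafts video is enough to remove the news item from the next choice context, and every later choice is made within a context that the earlier choice has already reshaped. This is one way to picture the burden of avoidance: the user sees only `history`-like outputs, never the profile or the update rule, and there is no step at which declining to choose leaves the context unchanged.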

4. Autonomy and Recommendation Systems

With the hyper-personalization of choice contexts facilitated by the tools of microtargeting and hypernudging, there emerges a dynamic between the user and the algorithm which delicately balances empowerment and manipulation (Dholakia et al., 2020). In recommendation systems, agents are presented with multiple options for choices which resonate with them, while simultaneously being subject to the burdens of avoidance, understanding, and experimentation which keep the motivations of the algorithm ever elusive.

With the “information overload” produced by the consumption of a large volume of recommended content (Huang et al., 2020), along with the constantly shifting contexts of recommended content which are designed to capture our attention for prolonged periods of time without deep retention or rumination, there emerges the question of how human autonomy is being impinged upon. To examine this, I will use Frankfurt’s and Dworkin’s models of autonomy to analyze such possible impingements on autonomy.

4.1. Obstructions to the execution of second-order volitions

According to Frankfurt, an agent’s decisions are autonomous, as explored in section 2, if their first order desires align with their second order volitions. When second order volitions are obstructed, the agent’s actions are, as per Frankfurt, not an enactment of their autonomous will. In interactions with recommendation systems, the process of creating and executing second order volitions becomes secondary to the agent’s first order desires. This obstruction occurs as a result of the design of choice contexts within recommendation systems. In particular, they are designed to appeal to an agent’s first order desires, without the necessary endorsement of their second order desires.

To demonstrate this, recall that in section 3.1, it was explained how the user’s attention is captured through educated predictive guesses which the algorithm makes on how the user’s data double matches up with various metrics as specified by the recommendation system. Since a key “desire” of the algorithm is user engagement, by the nature of our psychology, the recommended content is a “sensationalized” version of our desires (Yeung, 2017). I will use the term “synthesized desires” to refer to such desires, as they originate from our desires, but are in many ways hyperbolized. As the algorithm is acutely aware of the nuances within our preferences, there is a kaleidoscope of “sensationalized” desires, or synthesized desires, which are repeatedly recommended to us. As it is aware of how to keep our attention through constantly providing content which entertains us, we over-consume and are over-exposed to these recommendations (Mills, 2022), which increases the enactment of first order desires.

This rapid exposure to multiple alternating contexts through recommendation systems over a prolonged period of time creates an environment in which an agent accumulates multiple first order desires without being given adequate time or an appropriate environment to reflect on whether these desires are endorsed by their second order desires. Importantly, the lack of second order endorsement does not prevent the agent from acting on their first order desires, so that some of these desires are at most effective first order desires. As the agent’s identification with their first order desires strengthens, they increasingly act upon their effective first order desires rather than second order volitions, consequently diminishing their autonomy.

If the user is able to overcome these problems and give themselves the proper time and environment to reflect on these first order desires, there is the further problem of conflicts among their synthesized desires. Namely, within the over-saturated choice context presented to them, the probability of being exposed to conflicting second order desires increases. To see this, note that a person’s emotional motivations generally precede a comprehensive rational structure among their desires (Haidt, 2012; Hume, 2023). As the algorithm reinforces multiple synthesized desires, these desires resonate with the user’s pre-existing values and make the user more inclined to pursue their further synthesization. However, as the user is nudged in various directions based on contradictory interests and views which they have not reflected upon, they may find themselves agreeing with increasingly disparate and cacophonous stances. Upon rational reflection, then, the user may find that their desires have drifted into incompatibility.

Subsequently, due to the conflicting nature of these desires, it will be a non-trivial task to decide on which second order desire to act upon. With there being no mechanism to limit how many conflicting desires an algorithm can expose an agent to, and in fact, there being the burden of avoidance which further exacerbates the problem, it is possible that the agent will not choose to enact any of these desires due to psychological factors such as decision fatigue, hindering the enactment of second order volitions. This is a violation of the outlined principles for autonomy, since this impedes the actualization of second order volitions.

To clarify this problem with an example, let us consider a university student called Naya who is pursuing a degree in medicine while interning at the local healthcare center, which leaves her little free time. While scrolling through a short-form content platform, she is exposed to many young women crafting and creating beautiful artifacts in their spare time, making her reflect on the fact that she has a deep desire to pursue such a creative outlet herself. However, the longer she scrolls, the more she realizes that there are many options to pursue within the crafting space. She could pursue embroidery, resin art, painting, sewing, or crocheting, among many other niches. Prolonged exposure to repeated, alternating synthesized desires keeps her glued to the scrolling process instead of enacting the desires, since picking one hobby now feels limiting to her. Her hobbies, thus, do not come to fruition, impinging on the enactment of her second order volitions.

4.2. The Impingement on Procedural and Substantive Independence

Dworkin’s model of autonomy brings out further ways in which recommendation systems may impinge on our autonomy. Leaning on Dworkin’s procedural and substantive independence, we will see that many users’ interactions with recommendation algorithms limit their autonomy.

The interaction between the user and the recommendation algorithm can be thought of as one between two agents, where the algorithm assumes the jurisdiction to predict content based on the user’s data and their interaction with the platform (Lanzing, 2019). Essentially, the algorithm has taken on the responsibility (and hence authority) to curate the experience. Consequently, due to the elusive nature of the algorithm, the user is subject to “rules” of the algorithm which they are not privy to. The (mis)management of this responsibility, paired with the burdens of hypernudging, leads to an impingement on the user’s procedural and substantive independence.

The burden of understanding ensures that a user is not aware of the nature of the recommendations given to them. Hence, they are susceptible to any motives within the algorithm that do not align with their values or desires. The burden of experimentation shows that the algorithm will, without consent, subject the user to changes in content, priorities, or even political affiliations, as was the case with Cambridge Analytica (Boldyreva et al., 2018). The user may want to avoid being subject to such a deceptive commodification of their attention; however, the burden of avoidance means that a user cannot alter this paradigm. With this lack of control over their decision making process, it is reasonable to say that procedural independence has been violated.

Similarly, the user’s substantive independence can also be violated. As elaborated in section 2.2, a user loses their substantive independence if they have relinquished some of their freedom to choose in favor of that of a different person or a collective (such as the government, or their friends). This can be seen rather strikingly in the Facebook “mood experiment”, where it was concluded that a user’s Facebook feed could succeed in altering their mood. In interacting with the platform, the user implicitly relinquished their full freedom over their emotions to the influence of an external entity, the Facebook algorithm (Kramer et al., 2014).

5. Critiques and Comments

In this section, I will engage with some potential gaps in my analysis of autonomy with respect to hypernudging and microtargeting in recommendation systems. A critique which may be levied is that recommendation algorithms may also suggest first order desires which align with our second order desires, consequently aiding in fulfilling second order volitions. For example, it was found that the majority of TikTok users prefer skincare content created by trained professionals (Irfan et al., 2023). This suggests that the users engaged with high quality content, possibly in alignment with their second order desires. While this is certainly plausible, it does not eliminate the fact that users might still be subject to the burdens of hypernudging and microtargeting, as outlined in section 3. It is highly plausible that users with a second order desire for skincare will be over-saturated with skincare-related content, possibly from multiple board-certified dermatologists, offering multiple conflicting opinions on similar products. This could make them susceptible to not enacting a second order volition, as explored in section 4.

In a similar vein, it can also be suggested that the burdens of hypernudging, specifically the burden of understanding, can be satisfactorily diminished by gaining a deeper insight into how recommendation algorithms operate (Jones, 2023). This insight might restore some level of autonomy to the decision making process while interacting within the choice contexts, in particular, increased procedural and substantive independence. However, alleviating some of the burdens of hypernudging does not eliminate the root cause of why nudges and hypernudges are effective. As was explored in this paper, these algorithms target our psychology and desires, and affect us on a subconscious level. Thus, our decision-making process is in the long run exposed to an evolving scenario which will keep posing a threat to our autonomy.

Another point that may be raised is that defining autonomy through self-governance might result in an analysis favoring recommendation platforms: such platforms would enable autonomy due to the freedom of choice given to their users. As any user can post on many of these platforms, and content becomes popular when people find it interesting and interact with it, these platforms appear more democratic, or self-governing. In other words, the algorithm is contingent on delivering an experience that is set by the user’s preferences. To illustrate this self-governance: in response to concerns raised regarding TikTok’s influence on the increasing involvement of youth in activism for Palestine, the tech giant produced an elaborate press release stating that this was not the result of its algorithm, but of the genuine interest of teenagers who were concerned about the situation (The Truth about TikTok Hashtags and Content during the Israel-Hamas War, 2019). While through this lens these platforms do indeed have a democratic nature, the architecture of hypernudging governs how content is disseminated and shapes how users interact with it. As elaborated by Lanzing, hypernudging threatens decisional privacy, affecting our ability to critique our choice contexts within recommendation systems (Lanzing, 2019). Thus, this perceived self-governance is undermined by the outlined analysis. Furthermore, returning to the case of TikTok’s approach to politics, while TikTok claimed that it did not aid in the popularization of Palestine protests, it is claimed to have significantly impinged on the protests in Hong Kong, to the extent that it was used by the government to track protester information (Milmo, 2023). Regardless of its apparent democratic nature, the self-governance of users can be contingent on the choices of the organization, which undermines the user’s autonomy.

6. Conclusions

This paper argued that the microtargeting and hypernudging mechanisms of recommendation algorithms pose a threat to the autonomy of users. In particular, it explored how these mechanisms add cognitive burdens to the users of such platforms, inhibiting their ability to enact second order volitions and impinging on their procedural and substantive independence.

While there are other interesting angles from which this problem could have been tackled, such as considering other forms of autonomy, it remains the case that Frankfurt’s and Dworkin’s notions of autonomy offer an insightful framework for understanding a user’s evolving experience, and lend themselves well to the analysis of the architecture of hypernudging systems. As our interactions with recommendation algorithms continue to increase, and as these algorithms become an ever more popular tool used by large corporations, it is in our best interests to keep in mind how these algorithms may affect our decision-making process, and thus how they are shaping the choices we make.

Bibliography

- Boldyreva, E. L., Grishina, N. Y., & Duisembina, Y. (n.d.). Chapter 10: Cambridge Analytica: Ethics And Online Manipulation With Decision-Making Process. https://doi.org/10.15405/epsbs.2018.12.02.10

- Bouk, D. (2017). The History and Political Economy of Personal Data over the Last Two Centuries in Three Acts. Osiris (Bruges), 32(1), 85–106. https://doi.org/10.1086/693400

- Dholakia, N., Darmody, A., Zwick, D., Dholakia, R., & Firat, F. (2020). Consumer Choicemaking and Choicelessness in Hyperdigital Marketspaces. Journal of Macromarketing, 41. https://doi.org/10.1177/0276146720978257

- Dworkin, G. (1976). Autonomy and behavior control. The Hastings Center Report, 6(1), 23–28. https://doi.org/10.2307/3560358

- Frankfurt, H. (2001). Freedom of the Will and the Concept of a Person. In Agency and Responsibility (1st ed., pp. 77–91). Routledge. https://doi.org/10.4324/9780429502439-6

- Goldrick, S. (2023, September 12). Get Fresh Music Sunup to Sundown With daylist, Your Ever-Changing Spotify Playlist. Spotify. https://newsroom.spotify.com/2023-09-12/ever-changing-playlist-daylist-music-for-all-day

- Haggerty, K., & Ericson, R. (2001). The Surveillant Assemblage. The British Journal of Sociology, 51, 605–622.

- Haidt, J. (2012). The righteous mind: Why good people are divided by politics and religion (1st ed.). Pantheon Books.

- Harcourt, B. E. (2015). Exposed: Desire and disobedience in the digital age. Harvard University Press.

- Huang, Y., Zhou, L., Zeng, Z., Duan, L., & Wang, J. (2020). An Empirical Study on the Phenomenon of Information Narrowing in the Context of Personalized Recommendation. Journal of Physics: Conference Series, 1631(1), 012109. https://doi.org/10.1088/1742-6596/1631/1/012109

- Hume, D. (2023). A Treatise of Human Nature (A. M. Coventry, Ed.). Broadview Press.

- Irfan, B., Yasin, I., & Yaqoob, A. (2023). Navigating Digital Dermatology: An Analysis of Acne-Related Content on TikTok. Cureus, 15(9). https://doi.org/10.7759/cureus.45226

- Jones, C. (2023). How to train your algorithm: The struggle for public control over private audience commodities on Tiktok. Media, Culture & Society, 45(6), 1192–1209. https://doi.org/10.1177/01634437231159555

- Kahneman, D. (2011). Thinking, Fast and Slow. Doubleday Canada.

- Koren, Y., Bell, R., & Volinsky, C. (2009). Matrix Factorization Techniques for Recommender Systems. Computer, 42(8), 30–37. https://doi.org/10.1109/MC.2009.263

- Kramer, A. D. I., Guillory, J. E., & Hancock, J. T. (2014). Experimental evidence of massive-scale emotional contagion through social networks. Proceedings of the National Academy of Sciences, 111(24), 8788–8790. https://doi.org/10.1073/pnas.1320040111

- Lanzing, M. (2019). “Strongly Recommended” Revisiting Decisional Privacy to Judge Hypernudging in Self-Tracking Technologies. Philosophy & Technology, 32(3), 549–568. https://doi.org/10.1007/s13347-018-0316-4

- Mills, S. (2022). Finding the ‘nudge’ in hypernudge. Technology in Society, 71, 102117. https://doi.org/10.1016/j.techsoc.2022.102117

- Milmo, D. (2023, June 7). Chinese communist party ‘accessed Hong Kong protesters’ TikTok data’. The Guardian. https://www.theguardian.com/technology/2023/jun/07/communist-party-accessed-hong-kong-protesters-tiktok-data-former-executive-says

- Plath, S. (with Ames, L., & McCullough, F.). (2005). The bell jar (First Harper Perennial Modern Classics edition.). HarperPerennial.

- Seaver, N. (2022). Computing taste: Algorithms and the makers of music recommendation. The University of Chicago Press.

- Thaler, R. H. (with Sunstein, C. R.). (2021). Nudge: The final edition (Updated edition.). Penguin Books, an imprint of Penguin Random House LLC.

- The truth about TikTok hashtags and content during the Israel-Hamas war. (2019, August 16). Newsroom | TikTok. https://newsroom.tiktok.com/en-us/the-truth-about-tiktok-hashtags-and-content-during-the-israel-hamas-war

- Yeung, K. (2017). “Hypernudge”: Big Data as a mode of regulation by design. Information, Communication & Society, 20(1), 118–136. https://doi.org/10.1080/1369118X.2016.1186713

Hanna Barakat & Cambridge Diversity Fund / https://betterimagesofai.org / https://creativecommons.org/licenses/by/4.0/